Building and Iterating a Data-Driven Model to Predict and Evaluate Website Performance

Executive Summary

This project focuses on developing a forecasting system to predict and evaluate website performance over time.

Rather than treating forecasting as a one-off modelling exercise, I approached it as an iterative system: one that tracks predictions against actual outcomes, identifies where the model fails, and is refined accordingly.

By combining time-series forecasting with ongoing performance evaluation, the project highlights how predictive models behave in real-world conditions, where accuracy is not static and assumptions regularly break down.

Business Problem

Understanding website performance is often limited to retrospective metrics:

- page views

- users

- engagement

While useful, these metrics do not support forward-looking decision-making. The key challenge was:

How can website performance be forecasted in a way that is both useful and adaptable over time?

Specific issues included:

- No structured way to predict future performance

- Limited visibility into how accurate predictions are

- No feedback loop to improve forecasting over time

This creates a gap between measuring performance and anticipating it.

Methodology

This project was developed iteratively across multiple stages (as documented in the blog series), with each phase refining both the model and the evaluation approach.

1. Metric Definition & Data Structuring (Post 1)

The first step was establishing a consistent framework for measurement. This included:

- defining key website metrics (e.g. visits, users, engagement)

- structuring data for time-series analysis

- ensuring consistency in how performance is tracked over time

This stage highlighted that good forecasting starts with good definitions.

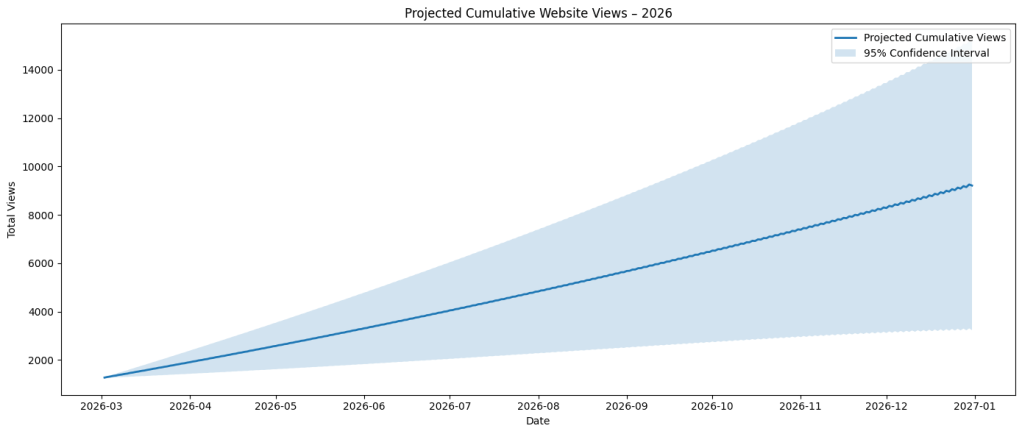
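The structuring step can be illustrated with a small sketch. It assumes raw data arrives as (ISO date, visits) rows, possibly several per day, and that a daily series is wanted; the function name `to_daily_series` is hypothetical. Each calendar day appears exactly once, so later forecast and actual values line up by position.

```python
from collections import defaultdict
from datetime import date, timedelta


def to_daily_series(rows, start, end):
    """Aggregate (iso_date, visits) rows into one value per calendar day.

    Returns a list of (date, visits) tuples covering start..end inclusive.
    """
    totals = defaultdict(int)
    for iso_day, visits in rows:
        # Several rows for the same day are summed into one daily total.
        totals[date.fromisoformat(iso_day)] += visits

    series = []
    day = start
    while day <= end:
        # Assumption: a day with no rows means zero visits.
        series.append((day, totals.get(day, 0)))
        day += timedelta(days=1)
    return series


rows = [("2024-01-01", 120), ("2024-01-01", 30), ("2024-01-03", 95)]
daily = to_daily_series(rows, date(2024, 1, 1), date(2024, 1, 3))
for day, visits in daily:
    print(day.isoformat(), visits)
```

Zero-filling is itself an assumption: a day with no rows may reflect a tracking outage rather than genuinely zero traffic, in which case marking it as missing is safer.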

2. Initial Forecast Development (Post 1–2)

A baseline forecasting model was introduced to generate predictions for future website performance. At this stage, the focus was not on model complexity, but on:

- establishing a working forecast

- creating a reference point for evaluation

This provided a foundation for comparing:

- expected performance

- actual outcomes
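The write-up does not specify which baseline model was used. As one illustrative option, a seasonal naive forecast simply repeats the most recent week of observations forward. This sketch assumes a weekly cycle in daily visits (`season_length=7`); `seasonal_naive_forecast` is a hypothetical helper, not the project's own code.

```python
def seasonal_naive_forecast(history, horizon, season_length=7):
    """Forecast each future step as the value observed one season earlier.

    history: list of numeric observations, oldest first.
    horizon: number of future steps to predict.
    """
    if len(history) < season_length:
        raise ValueError("need at least one full season of history")
    last_season = history[-season_length:]
    # Repeat the most recent season as many times as the horizon requires.
    return [last_season[i % season_length] for i in range(horizon)]


visits = [100, 110, 105, 120, 130, 90, 80,   # week 1
          102, 115, 108, 125, 128, 95, 85]   # week 2
print(seasonal_naive_forecast(visits, horizon=10))
```

A baseline this simple is useful as a reference point: any more complex model should beat it, and if it does not, the extra complexity is not earning its keep.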

3. Performance Tracking & Evaluation (Post 2–3)

Rather than stopping at prediction, the project tracked:

- forecasted values

- actual website performance

- variance between the two

This enabled analysis of:

- when predictions were accurate

- when they failed

- how error evolved over time
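Tracking forecasts against actuals can be sketched as a period-by-period comparison. Here the "variance" between the two is taken to mean the signed error (actual minus forecast), reported alongside the absolute percentage error and a rolling mean absolute error that shows how error evolves. The three-period window and the `evaluate` name are illustrative assumptions.

```python
def evaluate(forecast, actual, window=3):
    """Compare forecasts with actuals period by period.

    Returns one dict per period with the signed error (actual - forecast),
    the absolute percentage error, and the mean absolute error over the
    last `window` periods (fewer at the start of the series).
    """
    if len(forecast) != len(actual):
        raise ValueError("forecast and actual must be the same length")
    rows = []
    abs_errors = []
    for f, a in zip(forecast, actual):
        error = a - f
        abs_errors.append(abs(error))
        recent = abs_errors[-window:]
        rows.append({
            "forecast": f,
            "actual": a,
            "error": error,
            # Percentage error is undefined when the actual is zero.
            "ape": abs(error) / a if a != 0 else None,
            "rolling_mae": sum(recent) / len(recent),
        })
    return rows


forecast = [100, 100, 100, 100]
actual = [110, 95, 100, 80]
for row in evaluate(forecast, actual):
    print(row)
```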

4. Iterative Refinement (Post 3 onwards)

Insights from prediction errors were used to refine the approach:

- identifying consistent under/overestimation

- recognising patterns where the model breaks down

- adjusting assumptions and inputs

This turns the model from a static prediction into an adaptive system.
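One standard way to detect consistent under- or overestimation is a tracking signal: the cumulative forecast error divided by the mean absolute deviation. It is shown here as an illustrative technique, not necessarily the one used in the project. The ±4 threshold is a common rule of thumb rather than a fixed standard, and `bias_report` is a hypothetical name.

```python
def bias_report(forecast, actual, threshold=4.0):
    """Summarise whether a forecast systematically under- or overestimates.

    Errors are actual - forecast, so a positive bias means the model
    underestimates. The tracking signal (cumulative error divided by the
    mean absolute deviation) flags persistent bias beyond +/- threshold.
    """
    if len(forecast) != len(actual) or not forecast:
        raise ValueError("need equal-length, non-empty inputs")
    errors = [a - f for f, a in zip(forecast, actual)]
    mean_error = sum(errors) / len(errors)
    mad = sum(abs(e) for e in errors) / len(errors)
    # If every error is zero there is no bias to report.
    tracking_signal = sum(errors) / mad if mad else 0.0
    if tracking_signal > threshold:
        direction = "underestimating"
    elif tracking_signal < -threshold:
        direction = "overestimating"
    else:
        direction = "no persistent bias"
    return {"mean_error": mean_error,
            "tracking_signal": tracking_signal,
            "direction": direction}


# Actuals beat the forecast in almost every period.
forecast = [100] * 8
actual = [108, 112, 104, 110, 97, 115, 109, 111]
print(bias_report(forecast, actual))
```

Because the tracking signal accumulates errors, a single large miss moves it less than a long run of small misses in the same direction, which is exactly the "consistent" pattern the refinement step looks for.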

Skills

- Time-series analysis and forecasting

- Data structuring and metric design

- Performance evaluation and error analysis

- Iterative model development

- Translating analytical outputs into insights

Results & Insights

Several key patterns emerged across the project:

1. Forecast accuracy is not stable

Predictions degrade over time, particularly when:

- underlying behaviour changes

- external factors are not captured

2. Error is structured, not random

Forecast inaccuracies tend to cluster:

- around specific time periods

- during shifts in user behaviour
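Whether error is structured or random can be checked with the lag-1 autocorrelation of the forecast errors: runs of same-signed errors push it towards +1, while purely random errors keep it near zero. The sketch uses the approximate white-noise band of ±1.96/√n. Both the diagnostic and the function names are illustrative assumptions rather than the project's documented method.

```python
import math


def lag1_autocorrelation(errors):
    """Sample autocorrelation between each error and the one before it."""
    n = len(errors)
    if n < 3:
        raise ValueError("need at least three errors")
    mean = sum(errors) / n
    centred = [e - mean for e in errors]
    denom = sum(c * c for c in centred)
    if denom == 0:
        return 0.0  # constant errors: no variation to correlate
    num = sum(centred[t] * centred[t - 1] for t in range(1, n))
    return num / denom


def looks_structured(errors):
    """True if the lag-1 autocorrelation lies outside the approximate 95%
    band expected for white noise, +/- 1.96 / sqrt(n)."""
    return abs(lag1_autocorrelation(errors)) > 1.96 / math.sqrt(len(errors))


# Errors that stay positive for a run of periods, then turn negative.
clustered = [5, 6, 7, 6, 5, -5, -6, -7, -6, -5]
# Errors with little relationship to the immediately preceding period.
mixed = [2, 1, -2, -1, 2, 1, -2, -1]

print(round(lag1_autocorrelation(clustered), 3), looks_structured(clustered))
print(round(lag1_autocorrelation(mixed), 3), looks_structured(mixed))
```

The check uses the absolute value because strongly negative autocorrelation (errors that flip sign every period) is also structure. It only tests lag 1; patterns at longer lags, such as a weekly cycle, need the same calculation at those lags.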

3. Simpler models highlight weaknesses faster

Using a baseline model made it easier to:

- identify where assumptions fail

- understand limitations clearly

4. Tracking performance is as important as predicting it

The most valuable insight came from comparing predictions against reality, not from the predictions themselves.

Business Recommendation

If this approach were applied in a broader analytics context, I would recommend:

- Treat forecasting as an ongoing system rather than a one-off output

- Track forecast accuracy as a core KPI, regularly comparing predictions against actuals

- Continuously refine models based on error, treating failure as an input rather than a problem
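Treating forecast accuracy as a KPI could look like the following sketch, which rolls daily forecast/actual pairs up into a weekly mean absolute percentage error (MAPE) and flags weeks above a tolerance. The 15% alert threshold, the ISO-week grouping, and the `weekly_mape` name are assumptions for illustration.

```python
from collections import defaultdict
from datetime import date


def weekly_mape(records, alert_threshold=0.15):
    """Roll daily (day, forecast, actual) records up into a weekly MAPE KPI.

    Days with an actual of zero are skipped because percentage error is
    undefined there. Weeks whose MAPE exceeds alert_threshold are flagged.
    """
    by_week = defaultdict(list)
    for day, forecast, actual in records:
        if actual == 0:
            continue
        year, week, _ = day.isocalendar()
        by_week[(year, week)].append(abs(actual - forecast) / actual)

    report = {}
    for key in sorted(by_week):
        errors = by_week[key]
        mape = sum(errors) / len(errors)
        report[key] = {"mape": mape, "alert": mape > alert_threshold}
    return report


records = [
    (date(2024, 1, 1), 100, 100),
    (date(2024, 1, 2), 100, 125),
    (date(2024, 1, 8), 100, 50),
    (date(2024, 1, 9), 100, 0),  # skipped: zero actual
]
for week, kpi in weekly_mape(records).items():
    print(week, kpi)
```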

Impact

This project demonstrates a shift from static reporting to forward-looking, adaptive analysis. It enables:

- better expectation setting

- improved decision-making under uncertainty

- clearer understanding of model limitations

Next Steps

As the blog series continues, the project will evolve to include:

- more advanced forecasting techniques

- incorporation of external variables (seasonality, campaigns, trends)

- scenario-based forecasting (best/worst case)

- improved visualisation of prediction vs actual performance

Project Development Timeline

Part I: Metric design & initial model